Fragmentation kills Alpha

I had to give a 5-minute presentation on alpha for Future Alpha.

So I thought about what would be somewhat controversial but directionally correct.

That's the origin of this post, so let's dive in.

Alpha can be described as excess return beyond compensated risk.

That's it.

And it exists because markets are imperfect and someone on the other side is slower, constrained, emotional and/or structurally disadvantaged.

When you break it down, alpha comes from a handful of mechanisms:

- Information gaps: interpreting supply chain data or satellite imagery before it shows up in earnings revisions.

- Structural constraints: banks forced to sell assets due to capital rules. Index funds forced to rebalance at predictable times.

- Behavioral mistakes: panic selling during drawdowns. Crowded narrative trades that disconnect from fundamentals.

- Factor dislocations: value or momentum temporarily breaking because the economic regime shifted and models haven't caught up.

- Domain asymmetry: a healthcare specialist understanding clinical trial nuance that the generalist PM across the street simply can't see.

These have been the foundation of active management for decades.

For most of that time, the edge came from data plus human insight. Now we need to think about how AI changes each of these.

Reading filings faster? Commoditized.

Parsing earnings transcripts? Commoditized.

Running basic sentiment analysis? Automated.

Factor detection? Automated.

When Ken Griffin said last year that generative AI "falls short" when it comes to uncovering alpha at Citadel, I don't think the problem was that AI can't help generate alpha. The problem is that most firms are plugging AI into a fragmented stack.

I actually wrote about this at the time here.

AI models are accessible. Data is abundant. If everyone has access to the same LLMs and the same vendors, then access is no longer the moat.

So what is?

Everyone wants the answer to be AI, and it can be.

But AI is an amplifier: it amplifies whatever you already have.

If your infrastructure is fragmented, AI accelerates fragmentation.

If your infrastructure is unified, it's a different story...

The intersection that matters

The current constraint, in my opinion, is infrastructure.

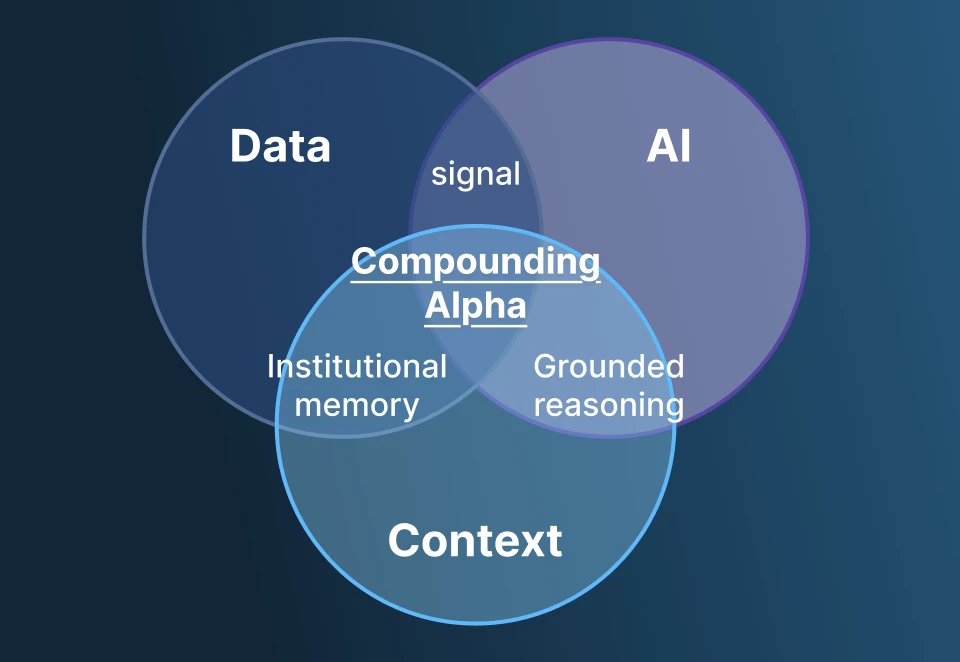

If you reduce modern alpha generation to first principles, it lives at the intersection of data, AI and context.

Each intersection creates something distinct.

- Signal (Data x AI): When AI refines raw data, you get feature extraction, pattern recognition, and forecasts. This is where 90% of the industry is focused right now. But signal alone decays fast.

- Grounded Reasoning (AI x Context): AI without context is generic. It misses constraints and gives you answers that are smart but ignore your mandate, your risk limits, and your prior research. This is the evolution from a generic chat response to reasoning that understands how your firm actually thinks and operates.

- Institutional Memory (Data x Context): Data inside context becomes persistent. Research becomes traceable. Experiments become reproducible. Decisions become auditable. The firm starts to learn across time and across teams.
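To make the Grounded Reasoning and Institutional Memory intersections more concrete, here is a minimal Python sketch under loudly stated assumptions. `Mandate`, `Proposal`, `review`, and `ResearchMemory` are hypothetical names, not an OpenBB or vendor API. The mandate is reduced to a position-size cap and a restricted list, and the proposal stands in for whatever an AI model produces from raw data. The point is the shape: an idea is checked against firm context before it counts, and the decision is stored with its rationale so the next person can find it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Mandate:
    """Hypothetical firm context the model must respect."""
    max_position_weight: float   # e.g. 0.05 = 5% of the portfolio
    restricted: frozenset[str]   # tickers the mandate excludes


@dataclass(frozen=True)
class Proposal:
    """Hypothetical trade idea, e.g. produced by an LLM from raw data."""
    ticker: str
    weight: float
    rationale: str


def review(proposal: Proposal, mandate: Mandate) -> list[str]:
    """Grounded reasoning (AI x Context): list every mandate violation."""
    violations = []
    if proposal.ticker in mandate.restricted:
        violations.append(f"{proposal.ticker} is on the restricted list")
    if abs(proposal.weight) > mandate.max_position_weight:
        violations.append(
            f"weight {proposal.weight:.1%} exceeds limit "
            f"{mandate.max_position_weight:.1%}"
        )
    return violations


@dataclass
class ResearchMemory:
    """Institutional memory (Data x Context): decisions persist with their why."""
    records: list[dict] = field(default_factory=list)

    def record(self, proposal: Proposal, violations: list[str]) -> dict:
        entry = {
            "at": datetime.now(timezone.utc).isoformat(),
            "ticker": proposal.ticker,
            "weight": proposal.weight,
            "rationale": proposal.rationale,
            "approved": not violations,
            "violations": violations,
        }
        self.records.append(entry)
        return entry

    def history(self, ticker: str) -> list[dict]:
        """Prior reasoning on a name, so the next analyst doesn't start from zero."""
        return [r for r in self.records if r["ticker"] == ticker]


mandate = Mandate(max_position_weight=0.05, restricted=frozenset({"XYZ"}))
memory = ResearchMemory()
idea = Proposal("ABC", 0.08, "Supplier data points to upside revisions")
entry = memory.record(idea, review(idea, mandate))
print(entry["approved"], entry["violations"])
# False ['weight 8.0% exceeds limit 5.0%']
```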

When all three converge - data, AI, and context working together inside the same environment - you get a system that learns.

The operating reality

Most firms don't operate this way.

Data lives in a browser. AI lives in a separate chat tool. Research context is scattered across Teams chats, PowerPoint decks, Excel models, and someone's OneNote.

Decisions get made in one system and documented (maybe) in another. The underlying reasoning - why this trade, why this allocation, why this risk call - disappears.

And every day, the firm starts from zero.

It produces insights. But it does not accumulate intelligence.

This is what I mean by fragmentation killing alpha.

The firm generates signal but never compounds it. AI gets layered on top as an afterthought, never in a way designed to compound those insights.

It turns out AI needs as much context as it needs raw data. Maybe even more?

Designing for compounding

If fragmentation destroys compounding, the next-generation firm has to be designed differently.

Not another tool on top of the stack.

An environment where the stack collapses into one thing.

A workspace where all proprietary and vendor data lives inside your controlled infrastructure. Where AI reasons within your mandate and risk constraints - not in a generic chat window disconnected from everything. Where every query, model run, and decision is logged and reproducible. Where research context persists beyond email. Where agents automate repeatable workflows without exporting intelligence outside your walls. Where governance is native - SSO, RBAC, audit trails built into the system, not bolted on after the fact.
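One way to get "logged and reproducible" together with a native audit trail is a hash-chained log, sketched below in Python. This is an illustrative assumption, not a description of how OpenBB implements it: `AuditLog` is a hypothetical class, and each entry records the parameters (dataset, model, seed) that a re-run would need. Every entry stores the SHA-256 hash of the previous one, so editing any past record breaks verification.

```python
import hashlib
import json


class AuditLog:
    """Hypothetical append-only, tamper-evident log of queries, runs, decisions."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so identical content always hashes the same.
        payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, user: str, action: str, params: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "seq": len(self.entries),
            "user": user,
            "action": action,
            # Detached copy; also fails fast if params aren't JSON-serializable.
            "params": json.loads(json.dumps(params)),
            "prev": prev,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute every hash and link; any edited entry breaks the chain."""
        prev = self.GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["seq"] != i or body["prev"] != prev:
                return False
            if self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("analyst_a", "query", {"dataset": "vendor_prices", "ticker": "ABC"})
log.append("analyst_a", "model_run", {"model": "momentum_v2", "seed": 42})
log.append("pm_b", "decision", {"ticker": "ABC", "side": "buy", "weight": 0.03})

intact = log.verify()
log.entries[1]["params"]["seed"] = 7  # quietly rewrite history
tampered = log.verify()
print(intact, tampered)  # True False
```

A hash chain makes tampering detectable, not impossible; in practice it would sit alongside access control such as SSO and RBAC and write-once storage.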

In that environment, the organization remembers. Every hypothesis improves the system. Every decision refines the reasoning layer. Every research cycle strengthens the firm's edge.

Infrastructure is the edge

In an AI-native world, you don't want to rent intelligence from fragmented tools; you're better off owning the infrastructure that compounds it.

This is what we're building at OpenBB. Not another financial workspace with data incentives, but not an AI copilot either.

Infrastructure where institutional alpha can exist and compound.